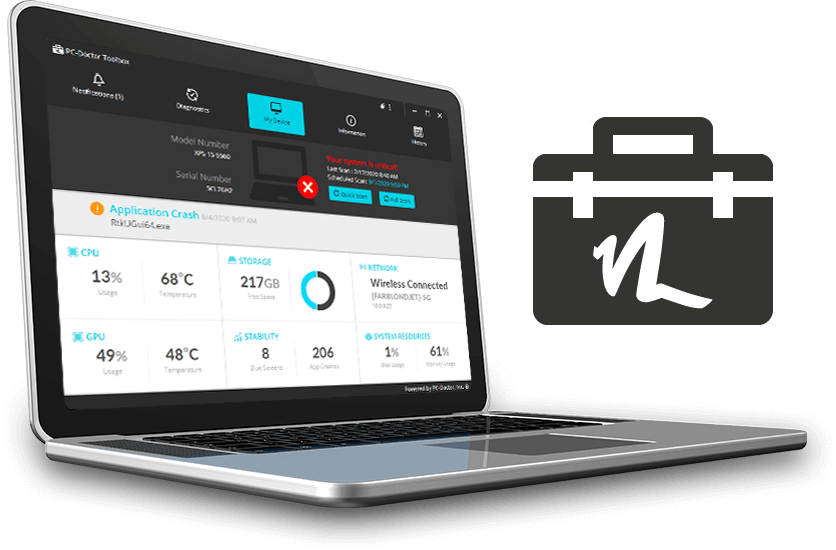

We are pleased to announce the release of Service Center 16 and Service Center 16 Drive Erase! The gold standard in hardware diagnostics has been elevated with a complete overhaul of the UI framework, delivering snappier response times and cleaner rendering. Plus, Service Center now supports saving diagnostic results, system information, and reports in PDF format!